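September 8, 2020: Generation. Use an RNN to generate a structured object, such as a poem or an article. A sentence is made up of "words" or "characters"; suppose you have a Chinese ...

January 24, 2021: To achieve this, we need to introduce an attention mechanism, which is what we call RNN seq2seq + attention. RNNse...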

Attention in RNNs. Understanding the mechanism with a…

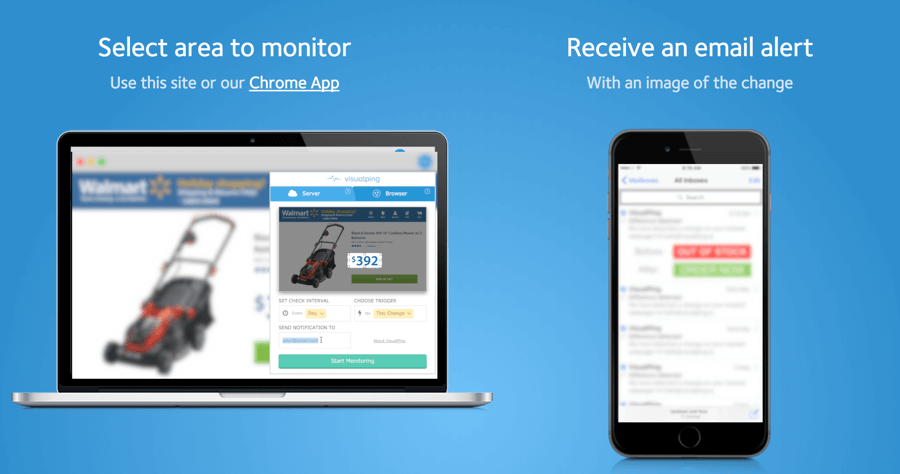


Attention is a mechanism combined in the RNN allowing it to focus on certain parts of the input sequence when predicting a certain part of the output sequence, ...
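To make "focus on certain parts of the input sequence" concrete, here is a minimal sketch of a single attention step. It assumes dot-product scoring, which is only one common choice and is not something the snippet above specifies. The names `attend`, `encoder_states`, and `decoder_state` are hypothetical, and the vectors are toy values rather than outputs of a trained RNN.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def attend(decoder_state, encoder_states):
    """One attention step (assumed dot-product scoring).

    Scores each encoder hidden state against the current decoder state,
    normalizes the scores into attention weights, and returns the weights
    together with the weighted sum of encoder states (the context vector).
    """
    scores = [dot(decoder_state, h) for h in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * h[i] for w, h in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return weights, context


# Toy example: three input positions, hidden size 2 (illustrative values only).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [0.0, 2.0]
weights, context = attend(decoder_state, encoder_states)
```

In a seq2seq decoder, the context vector would typically be combined with the decoder state before predicting the next output token. The weights show which input positions the model attends to at that step.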
